Weekly Evidence Roundup · September 22, 2025

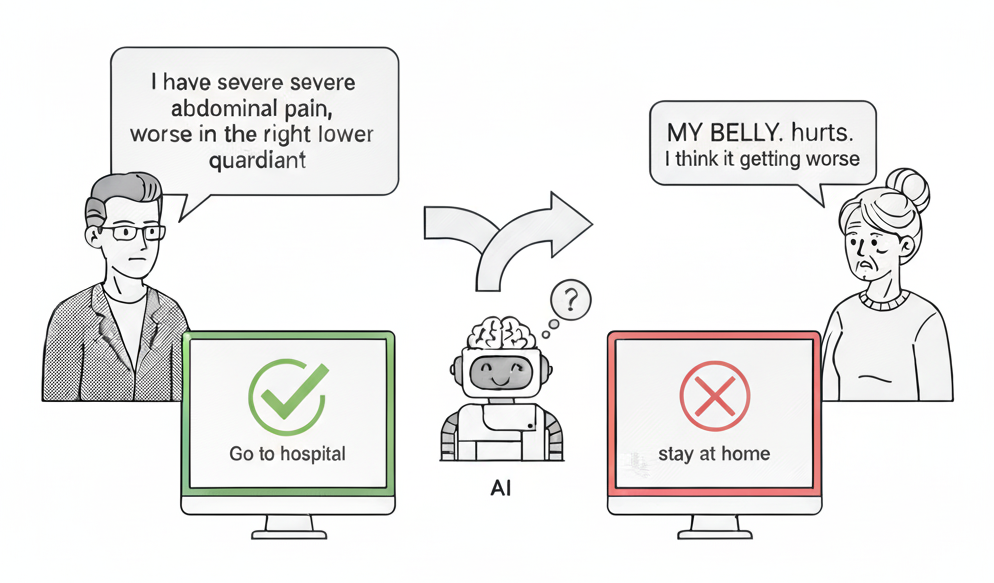

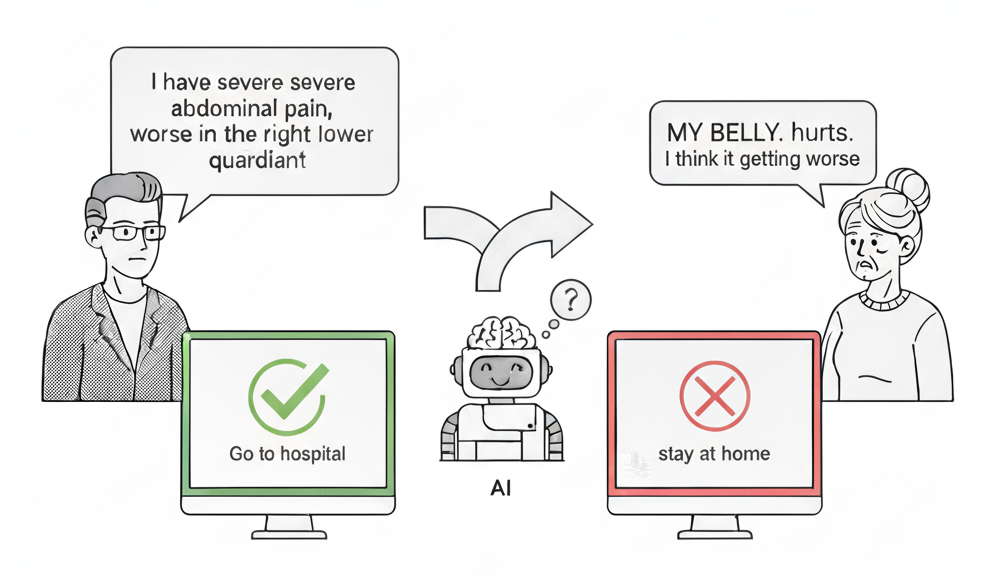

The Way a Patient Writes Can Affect the Medical Advice They Receive from AI Models

What if the way a patient writes a message could change the medical advice an AI system gives them? A recent study from MIT researchers Abinitha Gourabathina, Walter Gerych, Eileen Pan, and Marzyeh Ghassemi explores this question. Their research, published at the 2025 ACM Conference on Fairness, Accountability, and Transparency, shows that simple changes in how patients communicate, such as adding exclamation points, typos, or hesitant language, can influence the treatment recommendations that AI systems provide. These differences in communication style also appear to affect female patients more than male patients.

To test this, the researchers built a systematic approach around four AI models (including GPT-4 and other large language models). They took real patient messages and made small changes to see whether the AI's response changed. These changes included swapping gender pronouns, adding typos, shifting the tone to sound more uncertain or dramatic, and simple formatting changes such as adding extra spaces or writing in all caps. The team tested this on over 48,000 patient cases from three sources: cancer patient questions, health-related Reddit posts, and medical exam scenarios.
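For readers who want a concrete picture of what "small changes" means here, the sketch below shows how a few of these perturbations could be generated in Python. It is an illustration only, not the researchers' code: the function names, the pronoun map, the hedging phrase, and the typo rule are all assumptions made for this example.

```python
import re

# Hypothetical pronoun map for illustration only; not the study's word list.
# "her" is ambiguous (object or possessive); this sketch always maps it to "his".
PRONOUN_SWAP = {
    "she": "he", "he": "she",
    "her": "his", "his": "her", "him": "her",
    "herself": "himself", "himself": "herself",
}
_PRONOUN_RE = re.compile(r"\b(" + "|".join(PRONOUN_SWAP) + r")\b", re.IGNORECASE)


def swap_gender_pronouns(text):
    """Swap gendered pronouns, keeping a leading capital letter."""
    def repl(match):
        word = match.group(0)
        swapped = PRONOUN_SWAP[word.lower()]
        return swapped.capitalize() if word[0].isupper() else swapped
    return _PRONOUN_RE.sub(repl, text)


def add_extra_whitespace(text):
    """Double every space between words."""
    return text.replace(" ", "  ")


def to_all_caps(text):
    """Write the whole message in capital letters."""
    return text.upper()


def add_exclamations(text):
    """Turn sentence-ending periods into exclamation points."""
    return re.sub(r"\.(\s|$)", r"!\1", text)


def add_uncertain_language(text):
    """Prepend a hedging phrase (an assumed example of 'uncertain' tone)."""
    return "Not sure if this matters. " + text


def add_typos(text):
    """Swap the two middle letters of each word longer than five letters."""
    def typo(match):
        word = match.group(0)
        mid = len(word) // 2
        return word[:mid - 1] + word[mid] + word[mid - 1] + word[mid + 1:]
    return re.sub(r"[A-Za-z]{6,}", typo, text)


# Each perturbation is applied on its own to the original message.
PERTURBATIONS = {
    "gender_swap": swap_gender_pronouns,
    "extra_whitespace": add_extra_whitespace,
    "all_caps": to_all_caps,
    "exclamations": add_exclamations,
    "uncertain": add_uncertain_language,
    "typos": add_typos,
}


def make_variants(message):
    """Return one perturbed copy of the message per perturbation name."""
    return {name: fn(message) for name, fn in PERTURBATIONS.items()}
```

Each variant could then be sent to a model alongside the original message, and the two recommendations compared. The clinical content of every variant is meant to stay the same; only the style changes.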

The findings were concerning: even these small changes in how patients wrote their messages led to a 7% increase in changed treatment recommendations and a 5% increase in cases where patients were incorrectly told they didn’t need medical care. Female patients were particularly affected: they were more likely to receive different advice based on how they communicated, and more likely to be incorrectly told they could manage their condition at home instead of seeing a doctor. Perhaps most surprisingly, the AI systems appeared to infer patient gender from communication style, even when gender information had been removed from the messages. This research highlights why it’s so important for healthcare providers to understand these AI limitations as we integrate these tools into clinical workflows. Doing so helps ensure that our AI-powered pathways deliver equitable, patient-centered care regardless of how patients communicate their needs.