Weekly Evidence Roundup · October 6, 2025

From Clinical Pathways to AI Agents: How Healthcare Providers Can Build Transparent AI That Learns From Their Expertise

What if healthcare providers could understand exactly how AI systems make clinical decisions, rather than being asked to trust “black box” algorithms? A review published in Nature Reviews Bioengineering argues that transparency is critical in medical AI systems, while highlighting a broader point: when interdisciplinary frontline providers are trained to create and implement AI-augmented clinical pathways, they keep control over patient care, safeguard patients, and avoid relying on opaque solutions that could compromise clinical judgment.

The research, led by Chanwoo Kim, Soham U. Gadgil, and Su-In Lee, examines the current state of transparency in medical AI from training data to clinical deployment. Their findings underscore a crucial point that resonates deeply with the mission of educational platforms like CarePathIQ: achieving transparency requires a holistic approach that spans the entire development pipeline, and this transparency is only meaningful when healthcare providers have the knowledge and tools to understand and implement these systems effectively.

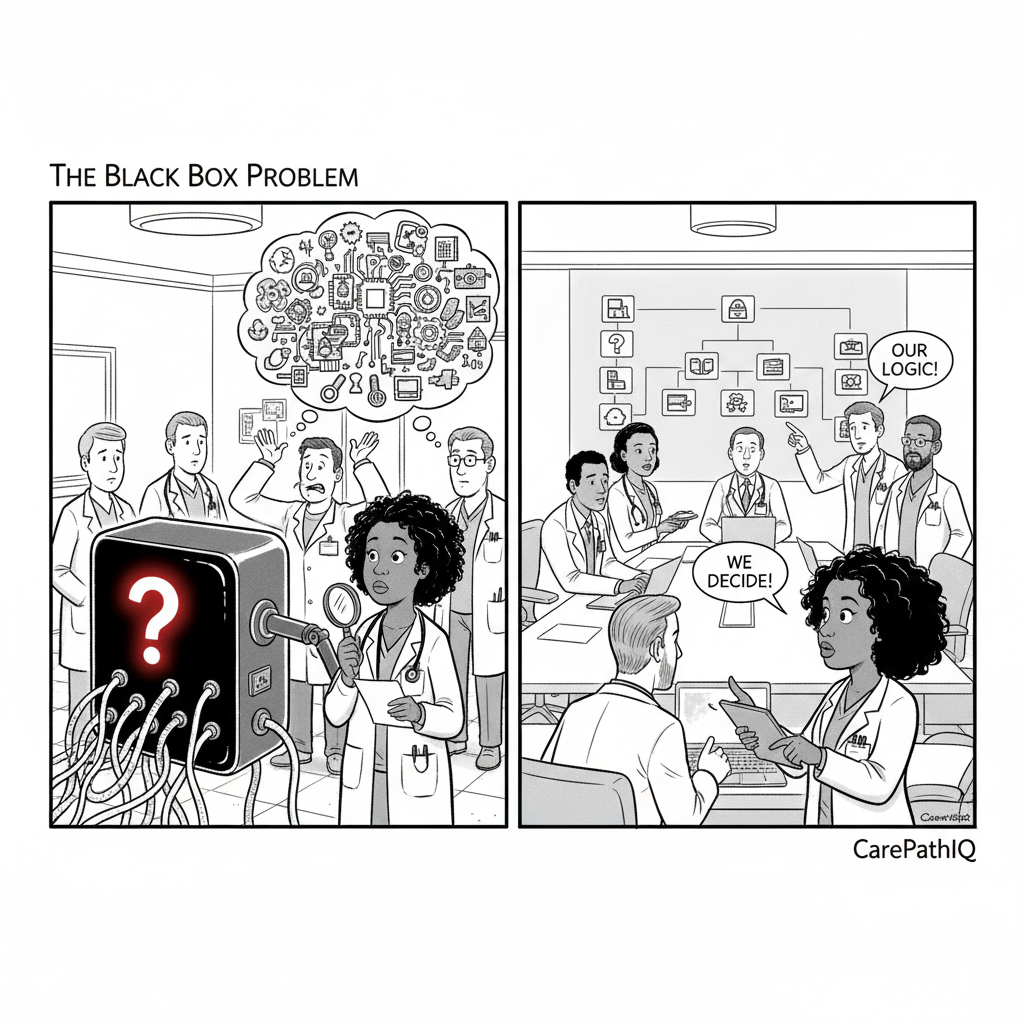

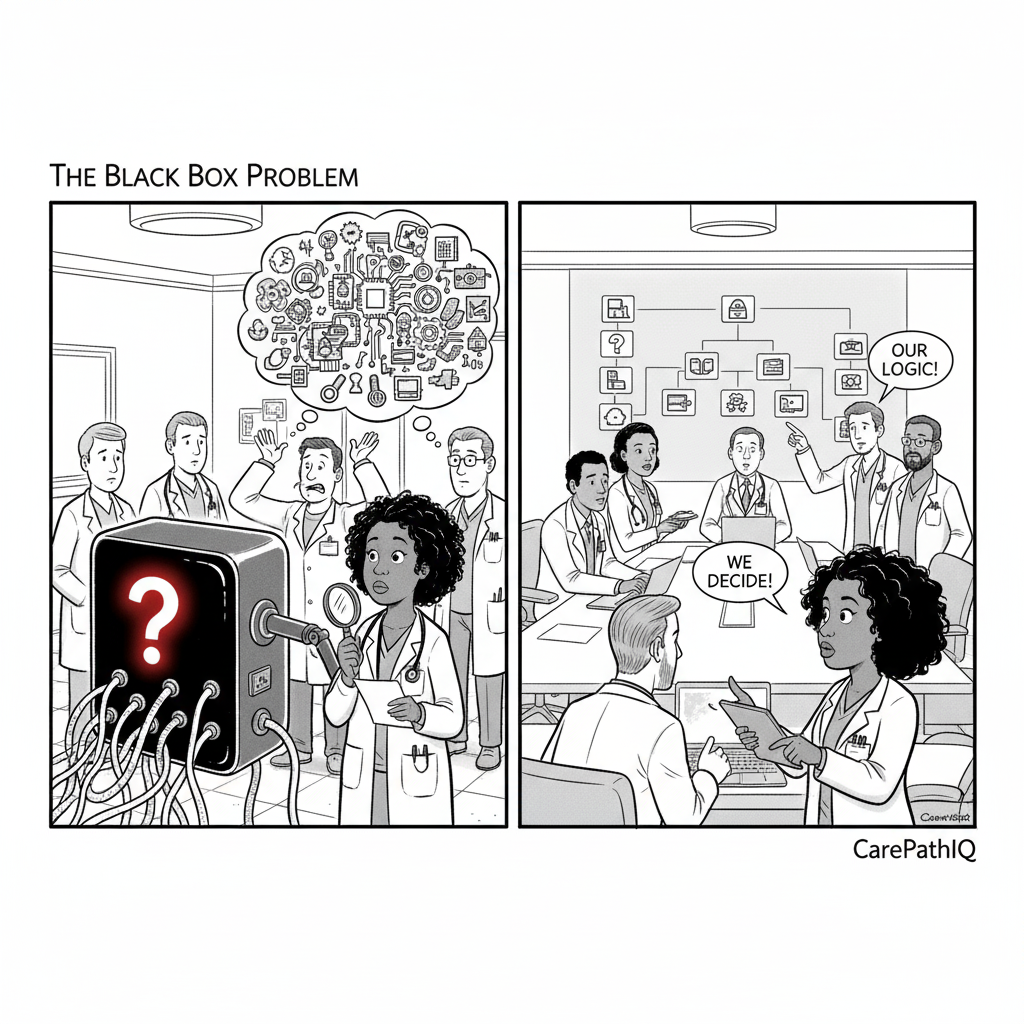

The Black Box Problem in Healthcare AI

The researchers identify a fundamental challenge in current medical AI deployment: many systems function as “black boxes,” making it nearly impossible for clinicians to interpret and verify their inner workings. This opacity creates significant risks in clinical settings where patient safety depends on understanding not just what an AI system recommends, but why it makes those recommendations.

The problem extends beyond individual patient interactions. When healthcare providers can’t understand how AI systems process data and make clinical predictions, they lose agency in the care process. They become dependent on systems they can’t fully trust or validate, which can lead either to over-reliance on AI recommendations or to outright rejection of tools that could help.

The Power of Provider Education

This is where the importance of educating interdisciplinary frontline providers becomes clear. When healthcare teams understand how to create and implement AI-augmented clinical pathways, they regain control over the technology rather than being controlled by it. This education enables providers to:

Maintain Clinical Autonomy: By understanding how AI systems work, providers can make informed decisions about when to follow AI recommendations and when to rely on their clinical judgment.

Ensure Patient Safety: Transparent AI systems allow providers to verify recommendations and catch potential errors before they impact patient care.

Prevent Black Box Dependencies: When providers understand the underlying logic of AI systems, they can identify when systems are making inappropriate recommendations or when they need to be updated or replaced.

CarePathIQ: Democratizing AI Education for Healthcare Providers

Educational platforms like CarePathIQ are addressing this critical need by providing free, comprehensive training on AI-augmented clinical pathways. CarePathIQ’s mission to democratize intelligent, patient-centered care pathways directly supports the transparency goals outlined in the research by ensuring that all healthcare providers, regardless of their institution’s resources, have access to the education needed to understand and implement AI systems effectively.

The platform’s interdisciplinary approach is particularly important because achieving transparency in medical AI requires collaboration across the entire healthcare team. When physicians, nurses, allied health professionals, and other frontline providers all understand how AI systems work, they can work together to ensure these systems are implemented safely and effectively.

From Clinical Pathways to Intelligent AI Systems

The research highlights several key techniques that promote explainability in medical AI, including feature attributions, concept-based explanations, and counterfactual explanations. However, these techniques are only valuable when healthcare providers have the knowledge to interpret and act on them.
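
To make two of these techniques concrete, here is a minimal Python sketch. It is not taken from the review. It assumes a hypothetical linear risk score; the feature names, weights, baseline values, and threshold are invented for illustration and have no clinical validity. For a linear score, a feature attribution is simply each input's contribution relative to a baseline, and a counterfactual is the value one input would need to take for the score to sit exactly at the alert threshold.

```python
# Hypothetical linear risk score. All names and numbers below are
# illustrative assumptions, not clinically validated values.
WEIGHTS = {"heart_rate": 0.02, "lactate": 0.5, "age": 0.01}
BASELINE = {"heart_rate": 80.0, "lactate": 1.0, "age": 50.0}
THRESHOLD = 1.0  # scores above this would trigger an alert


def attributions(patient):
    """Feature attribution: each input's contribution relative to baseline."""
    return {k: WEIGHTS[k] * (patient[k] - BASELINE[k]) for k in WEIGHTS}


def score(patient):
    """The risk score is the sum of the per-feature contributions."""
    return sum(attributions(patient).values())


def counterfactual(patient, feature):
    """Value of `feature` that puts the score exactly at THRESHOLD,
    holding every other input fixed."""
    others = score(patient) - attributions(patient)[feature]
    return BASELINE[feature] + (THRESHOLD - others) / WEIGHTS[feature]


patient = {"heart_rate": 110.0, "lactate": 2.5, "age": 70.0}
print(attributions(patient))                         # which inputs drive the score
print(round(score(patient), 2))                      # overall score
print(round(counterfactual(patient, "lactate"), 2))  # lactate value at the threshold
```

In this toy example lactate is the largest contributor, and the counterfactual says the score would sit at the threshold if lactate were about 1.4 with everything else unchanged. A provider reading such output still has to judge whether the explanation is clinically plausible, which is the skill the paragraph above is pointing to.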

While the research focuses on transparency in existing AI systems, there’s a crucial opportunity to build transparency into AI systems from the ground up: using AI-augmented clinical pathways to inform supervised non-parametric learning algorithms, meaning methods such as nearest-neighbor or decision-tree models that do not assume a fixed functional form. This approach addresses the transparency challenges identified in the research by creating AI systems that are easier to explain, because their outputs can be traced back to the structured clinical workflows they learned from.

When interdisciplinary healthcare providers create and implement AI-augmented clinical pathways, they’re not just following protocols. They’re generating rich, structured data about clinical decision-making processes. These pathways capture the nuanced reasoning, contextual factors, and interdisciplinary collaboration that go into patient care. This data becomes the foundation for supervised non-parametric learning algorithms that can learn from real clinical workflows rather than abstract datasets.

The Pathway-to-Algorithm Pipeline: AI-augmented clinical pathways serve as training data for supervised non-parametric learning algorithms in several key ways:

- Pattern Recognition: These algorithms can identify patterns in how different patient populations respond to various care approaches, learning from the structured pathways rather than isolated data points

- Contextual Learning: Non-parametric approaches excel at capturing the complex, non-linear relationships between patient characteristics, clinical decisions, and outcomes that are embedded in well-designed clinical pathways

- Adaptive Modeling: Unlike rigid parametric models, supervised non-parametric algorithms can adapt to new patient populations and clinical scenarios as pathways evolve
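
As a rough illustration of the pipeline above, the sketch below uses k-nearest neighbors, one of the simplest supervised non-parametric methods. The choice of algorithm is an assumption made here for illustration; the article does not name a specific one. The records, feature values (assumed to be pre-scaled to the 0 to 1 range), and branch labels are all hypothetical. The point is that the prediction comes with the past pathway decisions it was based on, so a provider can inspect them.

```python
import math
from collections import Counter

# Hypothetical pathway records: (scaled features, branch clinicians chose).
# Features are assumed to be (age_scaled, lactate_scaled) in [0, 1].
RECORDS = [
    ((0.20, 0.10), "standard"),
    ((0.30, 0.20), "standard"),
    ((0.80, 0.90), "escalate"),
    ((0.70, 0.80), "escalate"),
    ((0.40, 0.85), "escalate"),
]


def nearest(query, records, k=3):
    """Return the k past records closest to the query (Euclidean distance)."""
    return sorted(records, key=lambda rec: math.dist(query, rec[0]))[:k]


def predict(query, records, k=3):
    """Majority vote among the k nearest records.

    Returns the predicted branch and the neighbors that produced it,
    so the supporting cases can be reviewed alongside the suggestion.
    """
    neighbors = nearest(query, records, k)
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0], neighbors


label, evidence = predict((0.75, 0.80), RECORDS)
print(label)
for features, branch in evidence:
    print(features, branch)
```

Because there are no fitted coefficients, adding new pathway records changes future predictions immediately, which is one sense in which such models adapt as pathways evolve. Real use would need far more data, careful feature scaling, and validation.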

Supporting AI Agentic Workflows: The real power emerges when these algorithms support AI agentic workflows in clinical settings. AI agents—autonomous systems that can make decisions and take actions—can leverage the knowledge learned from clinical pathways to:

- Autonomous Decision Support: AI agents can suggest care modifications based on pathway patterns, while remaining transparent about their reasoning

- Workflow Optimization: Agents can identify bottlenecks or inefficiencies in clinical pathways and suggest improvements

- Predictive Interventions: By learning from pathway data, agents can predict when patients might deviate from expected pathways and suggest preventive interventions
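
A very small sketch of the transparency idea in the list above: the function below flags where an observed sequence of care steps leaves an expected pathway and states why. It is a rule-based check, not a learned predictor, and the pathway and step names are hypothetical. A real agent would combine something like this with a trained model and clinical review.

```python
# Hypothetical expected pathway; step names are illustrative only.
EXPECTED_STEPS = ["triage", "labs", "imaging", "treatment", "discharge"]


def first_deviation(observed, expected=EXPECTED_STEPS):
    """Return the first point where the observed steps leave the pathway,
    or None if the observed steps match the pathway so far."""
    for index, step in enumerate(observed):
        expected_step = expected[index] if index < len(expected) else None
        if step != expected_step:
            return {
                "index": index,
                "expected": expected_step,
                "observed": step,
                # A human-readable reason keeps the flag inspectable.
                "reason": f"expected {expected_step!r} but saw {step!r}",
            }
    return None


print(first_deviation(["triage", "labs", "treatment"]))  # imaging was skipped
print(first_deviation(["triage", "labs"]))               # on pathway so far
```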

CarePathIQ’s educational approach addresses this knowledge gap by teaching providers not just AI concepts, but also how to create pathways that can effectively inform these AI systems. This includes understanding how to:

- Design clinical pathways that capture meaningful decision-making patterns

- Structure pathway data in ways that support non-parametric learning

- Evaluate AI system recommendations in the context of individual patient needs

- Integrate AI tools into existing clinical workflows without disrupting patient care

- Monitor AI system performance and identify when systems need updates or modifications

- Collaborate with interdisciplinary teams to ensure consistent and safe AI implementation
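
For the point about structuring pathway data, one possible shape is sketched below. The record type, its field names, and the example values are assumptions for illustration, not a CarePathIQ format. Each pathway decision is stored with its inputs, the branch chosen, and the stated rationale, and a helper flattens it into a (features, label) pair that a supervised learner could consume.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathwayDecision:
    """One decision point in a clinical pathway (hypothetical schema)."""
    pathway: str      # pathway identifier
    step: str         # decision point within the pathway
    features: dict    # numeric inputs available at decision time
    decision: str     # branch the clinician chose (the training label)
    rationale: str    # free-text reason, kept for later review
    role: str         # role of the person who decided


def to_example(record, feature_order):
    """Flatten a record into (feature_vector, label).

    Fails loudly on missing inputs instead of silently imputing them.
    """
    missing = [name for name in feature_order if name not in record.features]
    if missing:
        raise ValueError(f"missing features: {missing}")
    vector = tuple(float(record.features[name]) for name in feature_order)
    return vector, record.decision


record = PathwayDecision(
    pathway="example-pathway",
    step="initial-assessment",
    features={"heart_rate": 110, "lactate": 2.5},
    decision="escalate",
    rationale="rising lactate with tachycardia",
    role="nurse",
)
print(to_example(record, ["lactate", "heart_rate"]))
```

Keeping the rationale and role next to each label means a later explanation can point back to who decided what and why, rather than to an anonymous data point.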

The Future of Transparent Healthcare AI

The research emphasizes the need for continuous monitoring and system updates to ensure AI systems remain reliable over time. This requires healthcare providers who understand both the technology and the clinical context in which it operates.
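
One simple way to picture continuous monitoring is to track how often clinicians accept an AI recommendation over a recent window and flag the system for review when agreement drops. The sketch below is an assumption about how such a check might look, not a method described in the review; the class name, window size, and threshold are hypothetical, and agreement is only a proxy for reliability.

```python
from collections import deque


class AgreementMonitor:
    """Rolling agreement rate between AI suggestions and clinician decisions."""

    def __init__(self, window=50, min_rate=0.8):
        self.outcomes = deque(maxlen=window)  # keeps only the latest `window` cases
        self.min_rate = min_rate

    def record(self, ai_recommendation, clinician_decision):
        self.outcomes.append(ai_recommendation == clinician_decision)

    def rate(self):
        """Fraction of recent cases where both agreed, or None if no data yet."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """Flag only once the window is full, to avoid alarms on tiny samples."""
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.rate() < self.min_rate


monitor = AgreementMonitor(window=4, min_rate=0.75)
for ai, clinician in [("escalate", "escalate"), ("standard", "escalate"),
                      ("standard", "escalate"), ("standard", "standard")]:
    monitor.record(ai, clinician)
print(monitor.rate(), monitor.needs_review())
```

A falling agreement rate does not say who is right. It is a prompt for the clinical team to look at recent cases and decide whether the system, the pathway, or the patient population has changed.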

Educational platforms like CarePathIQ are preparing the healthcare workforce for this future by providing training that goes beyond basic AI literacy to include practical implementation skills. This education enables providers to be active participants in the development and deployment of AI systems rather than passive recipients of technology they don’t understand.

Building Trust Through Education and Transparency

The research identifies trust as a critical factor in successful AI deployment, noting that trust must be built among patients, providers, developers, and regulators. This trust can only be achieved when all stakeholders understand how AI systems work and can verify their safety and effectiveness.

CarePathIQ’s educational mission supports this trust-building process by ensuring that healthcare providers have the knowledge and skills needed to evaluate AI systems critically and implement them safely. When providers understand the technology they’re using, they can communicate more effectively with patients about AI-assisted care and make informed decisions about when and how to use these tools.

The Path Forward: Education as Empowerment

The research concludes by emphasizing the need to democratize access to AI tools and integrate transparency features into clinical workflows. This vision aligns with CarePathIQ’s mission to democratize intelligent care pathways and provide accessible education to all healthcare providers.

As the healthcare industry continues to integrate AI technologies, the success of these implementations will depend largely on the education and empowerment of frontline providers. When interdisciplinary healthcare teams understand how to create and implement AI-augmented clinical pathways, they maintain control over patient care, ensure safety, and prevent reliance on black box solutions that could compromise clinical judgment.

The Technical Advantage: This educational approach creates a powerful feedback loop that addresses the transparency challenges identified in the research. As providers learn to create better clinical pathways, these pathways generate higher-quality training data for supervised non-parametric learning algorithms. These algorithms, in turn, can support more sophisticated AI agentic workflows that assist rather than replace clinical judgment. The result is a transparent, explainable AI system that learns from real clinical practice and supports providers in making better decisions—exactly the kind of transparent AI deployment that the research calls for.

The future of healthcare AI isn’t about replacing human expertise with opaque algorithms. It’s about giving healthcare providers the knowledge and tools they need to create AI systems that learn from their expertise and support their clinical judgment. Educational platforms like CarePathIQ are working toward this future by giving all healthcare providers access to the education they need to be active participants in AI adoption: creating pathways that inform intelligent algorithms and supporting agentic workflows that enhance rather than replace human clinical expertise.