Weekly Evidence Roundup · October 17, 2025

The Hidden Bias in Ambient AI: How English-Dominant Training Data Could Harm 67 Million Americans


What if the ambient AI systems designed to improve healthcare actually perpetuate the very inequities they’re meant to solve? A recent perspective piece in the BMJ highlights a critical but often overlooked risk in healthcare AI: the unchecked diffusion of ambient AI systems (including AI scribes, voice assistants, and automated documentation tools) without proper regulatory oversight or community input. While the perspective focuses on global health equity, the same patterns of digital inequity could affect patients right here in the United States—particularly the 67 million Americans who speak languages other than English at home and already face significant barriers to quality healthcare.

The perspective, authored by Kynthia Ravikumar from Imperial College London, identifies a troubling pattern that extends far beyond ambient AI: the risk of “digital colonialism,” where technologies designed in high-income contexts are introduced into different linguistic, legal, and clinical ecosystems, often with harmful consequences. The same dynamic could play out within our own healthcare system: ambient AI systems trained predominantly on English-language data from English-speaking populations could be deployed to serve patients with limited English proficiency, creating barriers to care and perpetuating health inequities.

The Hidden Bias: How Ambient AI Systems Could Fail 67 Million Americans

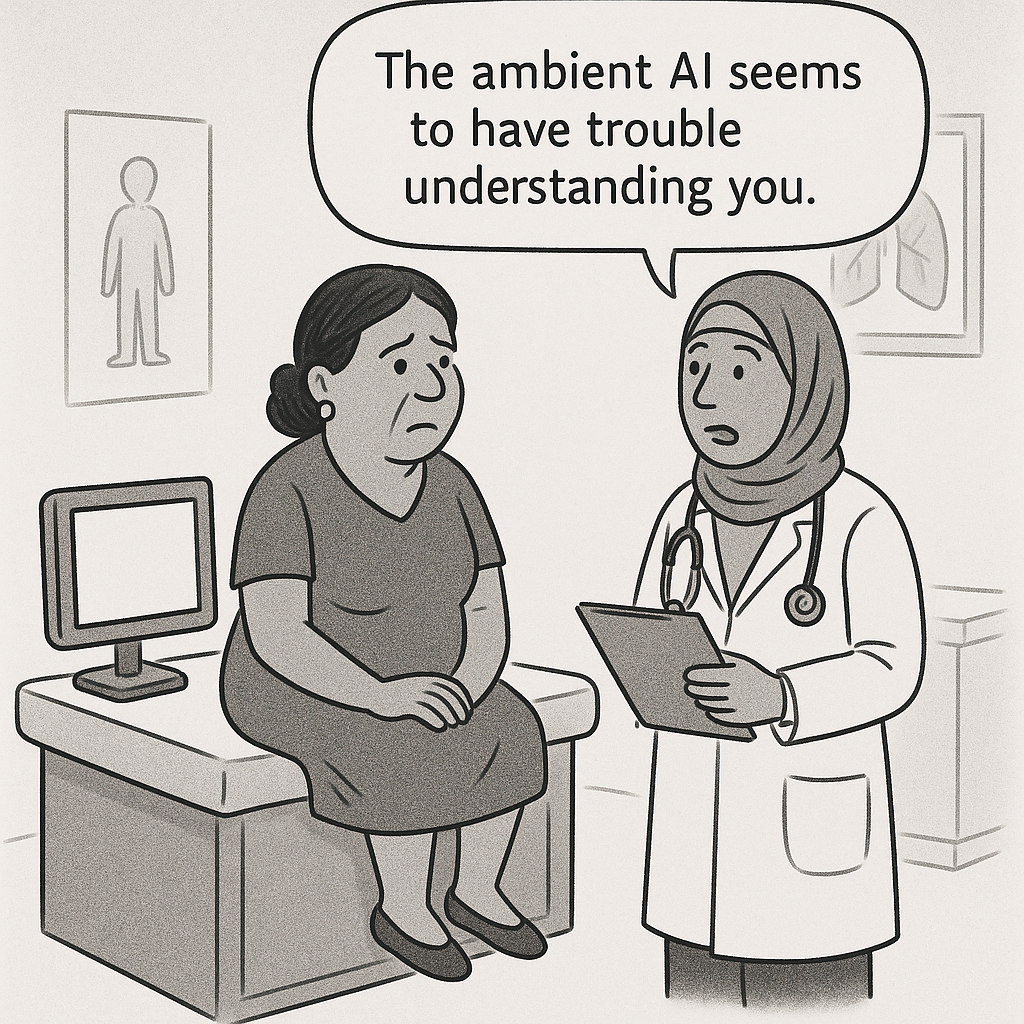

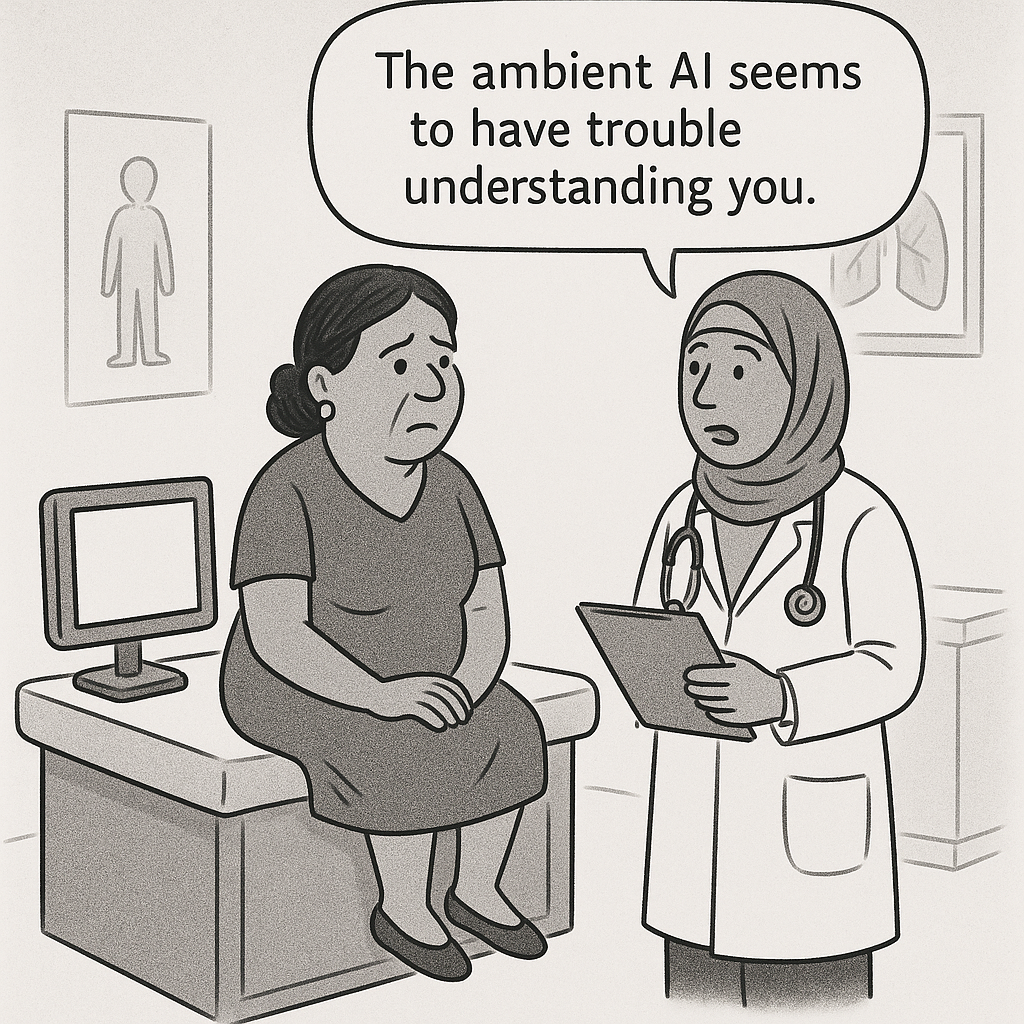

Consider Maria, a Spanish-speaking patient with diabetes who visits her healthcare provider. The ambient AI system in the exam room—designed to listen, transcribe, and document the conversation—might struggle to understand her accented English and medical terminology in Spanish, potentially leading to incomplete or inaccurate documentation. The AI scribe could miss critical details about her symptoms, misinterpret her responses to questions, or fail to capture the nuances of her concerns. Similarly, AI-augmented care pathways trained on data from predominantly English-speaking patients might recommend treatments and follow-up instructions that don’t account for Maria’s language needs or cultural context around diabetes management. The result? Maria leaves the appointment confused about her care plan, potentially leading to poor medication adherence and worsening health outcomes.

This scenario represents a significant concern for the 67 million Americans who speak languages other than English at home. The current approach to ambient AI development often follows a dangerous pattern: these systems are typically trained predominantly on English-language data from English-speaking populations, making them potentially less effective for patients with limited English proficiency, different cultural contexts, or non-standard communication patterns. The “hidden bias” lies in the assumption that English-dominant training data will work equally well for all patients—an assumption that could prove costly for both patients and healthcare systems.

The perspective highlights a crucial distinction: while clinicians make idiosyncratic errors, algorithmic bias, if present, would be systematized and replicated at scale. For patients with limited English proficiency, this means that AI tools trained on English-dominant data could fail in the same way at every encounter, creating barriers to care that compound existing health inequities. The same concern applies to AI-augmented care pathways that are built using data from English-speaking, predominantly white populations and then deployed to serve diverse patient populations with different needs, languages, and cultural contexts.

The Hidden Bias in Action: How Ambient AI Systems Could Systematically Fail

The risks identified in the perspective highlight important concerns that could affect healthcare systems across the United States. For the 67 million Americans who speak languages other than English at home, ambient AI systems trained on English-dominant data could potentially create multiple layers of harm:

Documentation Failures: Ambient AI systems (including AI scribes) trained on English-dominant data might struggle with accented English, medical terminology in other languages, or cultural communication patterns, potentially creating incomplete or inaccurate medical records that could lead to misdiagnoses and inappropriate treatments.

Voice Recognition Bias: AI voice assistants and transcription tools might consistently mishear or misinterpret patients with accents, leading to systematic documentation errors that compound over time and create a distorted medical record.

Cultural Communication Gaps: Ambient AI systems might miss culturally specific ways of describing symptoms, pain, or concerns that don’t align with English medical terminology, leading to an incomplete understanding of patient needs.

Consent and Autonomy: When ambient AI systems can’t communicate effectively with patients in their preferred language or cultural context, patients might not be able to provide truly informed consent for AI involvement in their care, potentially violating their autonomy and rights.

This is particularly concerning for AI-augmented care pathways, which are meant to guide clinical decision-making. When these pathways are built using data from English-speaking, predominantly white populations and then deployed to serve patients with limited English proficiency or different cultural contexts, they could potentially harm rather than help patient care. The pathways might recommend treatments that don’t account for language barriers, use terminology that doesn’t resonate with patients, or fail to consider cultural factors that significantly affect health outcomes and treatment adherence.

The Solution: Building Ambient AI Systems That Serve All Patients

The solution isn’t to halt innovation but to embed health equity into ambient AI governance from the very beginning. This means democratizing not just access to ambient AI tools, but the entire development process to ensure that these systems are designed to serve the needs of all patients, including the 67 million Americans who speak languages other than English at home. Educational platforms like CarePathIQ are leading the way in this approach by providing free, accessible training that enables healthcare providers from diverse backgrounds to create AI-augmented care pathways that serve their specific patient populations.

Building With, Not Just For Patients

The perspective calls for partnerships that center patients and communities in ambient AI design, training, and monitoring. This approach ensures that patients are empowered to consent meaningfully to AI involvement in their care, regardless of their language proficiency or cultural background. For ambient AI systems, this means:

Patient-Centered Design: Involving patients with limited English proficiency, their families, and community leaders in ambient AI system development

Multilingual Training Data: Using data that reflects the linguistic diversity of the patient population, including accented English, medical terminology in multiple languages, and cultural communication patterns

Patient Validation: Testing ambient AI systems with patients from diverse linguistic and cultural backgrounds before deployment

Ongoing Patient Input: Creating feedback loops that allow patients and their communities to shape and improve ambient AI systems over time

Language Access: Ensuring AI tools can communicate effectively in patients’ preferred languages and cultural contexts

Addressing the Regulatory Gap in the United States

The perspective highlights a crucial gap that extends beyond global health: the United States lacks comprehensive AI governance frameworks that specifically address language access and cultural competency in healthcare AI. Current FDA guidance on AI medical devices doesn’t adequately address the needs of patients with limited English proficiency, allowing AI tools to be deployed without proper validation for diverse patient populations.

This creates a two-tiered safety standard where AI tools are validated primarily with English-speaking patients but then deployed to serve all patients, including those with limited English proficiency who may be most vulnerable to AI-related harms. The solution requires developing transparent evaluation protocols that specifically test AI tools with diverse patient populations, including those with limited English proficiency, different cultural backgrounds, and varying health literacy levels.
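One way to picture such an evaluation protocol for an AI scribe is to compare transcription accuracy across patient language groups rather than reporting a single overall number. The Python sketch below is illustrative only: the group labels, the sample transcripts, and the choice of word error rate (WER) averaged per group are assumptions made for this example, not a method described in the perspective or a validated clinical standard.

```python
from collections import defaultdict


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        return 0.0 if not hyp else 1.0
    # Dynamic-programming edit distance over words, one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # word missing from the transcript
                curr[j - 1] + 1,     # extra word in the transcript
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


def wer_by_group(samples):
    """Average WER per group from (group, reference, hypothesis) tuples."""
    scores = defaultdict(list)
    for group, reference, hypothesis in samples:
        scores[group].append(word_error_rate(reference, hypothesis))
    return {group: sum(vals) / len(vals) for group, vals in scores.items()}


# Hypothetical data: human-verified reference text vs. AI scribe output.
samples = [
    ("english_primary",
     "patient takes metformin twice daily",
     "patient takes metformin twice daily"),
    ("limited_english_proficiency",
     "patient takes metformin twice daily",
     "patient takes met forming daily"),
]

results = wer_by_group(samples)
for group, wer in results.items():
    print(f"{group}: WER = {wer:.2f}")
```

A persistent gap between groups in a report like this would be a signal to hold deployment until the tool has been improved and re-tested with the affected patients. What size of gap is acceptable is a governance decision, not something the code can settle.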

Healthcare organizations, regulatory bodies, and AI developers must work together to create frameworks that ensure AI tools are safe and effective for all patients, not just those who speak English fluently or come from the cultural contexts in which the tools were developed.

CarePathIQ: A Model for Patient-Centered AI Development

Educational platforms like CarePathIQ represent a crucial step toward democratizing AI development in healthcare. By providing free, comprehensive training on creating AI-augmented care pathways, CarePathIQ enables healthcare providers from diverse backgrounds to build tools that serve their specific patient populations, including those with limited English proficiency, rather than relying on generalized solutions.

This approach addresses the core issues identified in the perspective and the specific challenges facing patients with limited English proficiency:

Patient-Centered Expertise: Empowers healthcare providers to create pathways that reflect their experience serving diverse patient populations

Language and Cultural Sensitivity: Enables pathways that account for multiple languages, cultural contexts, and communication patterns

Patient Ownership: Gives patients and their communities control over the AI tools that affect their care

Transparent Development: Ensures that pathways are built using methods that providers can understand and validate with their patients

Accessible Training: Provides free education that enables providers in underserved communities to develop AI tools tailored to their patients’ needs

The Path Forward: Equity-First Ambient AI Development for All Patients

As ambient AI systems (including AI scribes, voice assistants, and automated documentation tools) gain medical device status, deployment decisions should account for the risk of perpetuating systemic biases. But the solution goes beyond deployment: it requires reimagining how we develop these tools in the first place so that they serve all patients, including the 67 million Americans who speak languages other than English at home.

The perspective concludes with a powerful statement: “Equity must not be an afterthought.” This applies not just to AI deployment, but to the entire development process. We need AI-augmented care pathways that are built with and for all patients, not just deployed to them.

This means:

Inclusive Development Teams: Ensuring that AI development teams include patients with limited English proficiency, their families, and representatives from diverse communities

Diverse Training Data: Using data that reflects the full spectrum of patient populations, including accented English, medical terminology in multiple languages, and cultural communication patterns

Patient Validation: Testing AI tools with patients from diverse linguistic and cultural backgrounds before widespread deployment

Ongoing Patient Input: Creating mechanisms for patients and their communities to shape and improve AI tools over time

Transparent Processes: Making AI development processes accessible and understandable to patients and their advocates

Language Access Standards: Establishing clear requirements for AI tools to function effectively in multiple languages and cultural contexts

Building a More Equitable Future for Ambient AI

The perspective on ambient AI systems and digital colonialism reveals a fundamental truth: technology is not neutral. The way we build ambient AI systems reflects the values, assumptions, and priorities of those who create them. If we want ambient AI systems that truly serve all communities, we must ensure that all communities have a voice in their creation.

Educational platforms like CarePathIQ are showing the way forward by democratizing not just access to ambient AI tools, but the knowledge and skills needed to build them. This approach helps ensure that ambient AI systems are truly patient-centered and evidence-based, serving the specific needs of the populations they are built for rather than relying on generalized black-box AI solutions.

The future of healthcare AI isn’t about deploying ambient AI tools built for privileged populations to everyone else—it’s about empowering all communities to build the tools they need to serve their patients effectively. This is how we can prevent digital colonialism and build a more equitable future for healthcare AI, where ambient AI systems are designed to truly serve all patients, regardless of their language proficiency or cultural background.